Work and Learning in Context

A systems-level perspective and Five Context Layers for reframing work with humans and AI.

The Modern Cyclopes (Iron Rolling Mill), Adolph von Menzel, 1875; Commons Public Domain

This article is written in response to two reports published in March 2026: Anthropic’s 2026 Economic Index: Learning Curves and Google’s Agentic AI and the Next Intelligence Explosion. Both arrive at similar conclusions from somewhat different angles: the competitive advantage of the AI era is not access to tools, but the quality of the context in which humans and AI work together. Anthropic documented that experienced users outperform newcomers not because of more training, but because of deeper contextual fluency developed through situated, repeated engagement with real work. Google’s team, partnering with the Berggruen Institute, argued that intelligence itself, in both AI systems and human organizations, emerges not from individual processing power but from the richness of the social and institutional context surrounding it. Context, in other words, is not a learning design preference. Context is the mechanism through which both human and artificial intelligence become capable, and it is the variable most organizations have not yet learned to design for, while content remains L&D’s modern Cyclops.

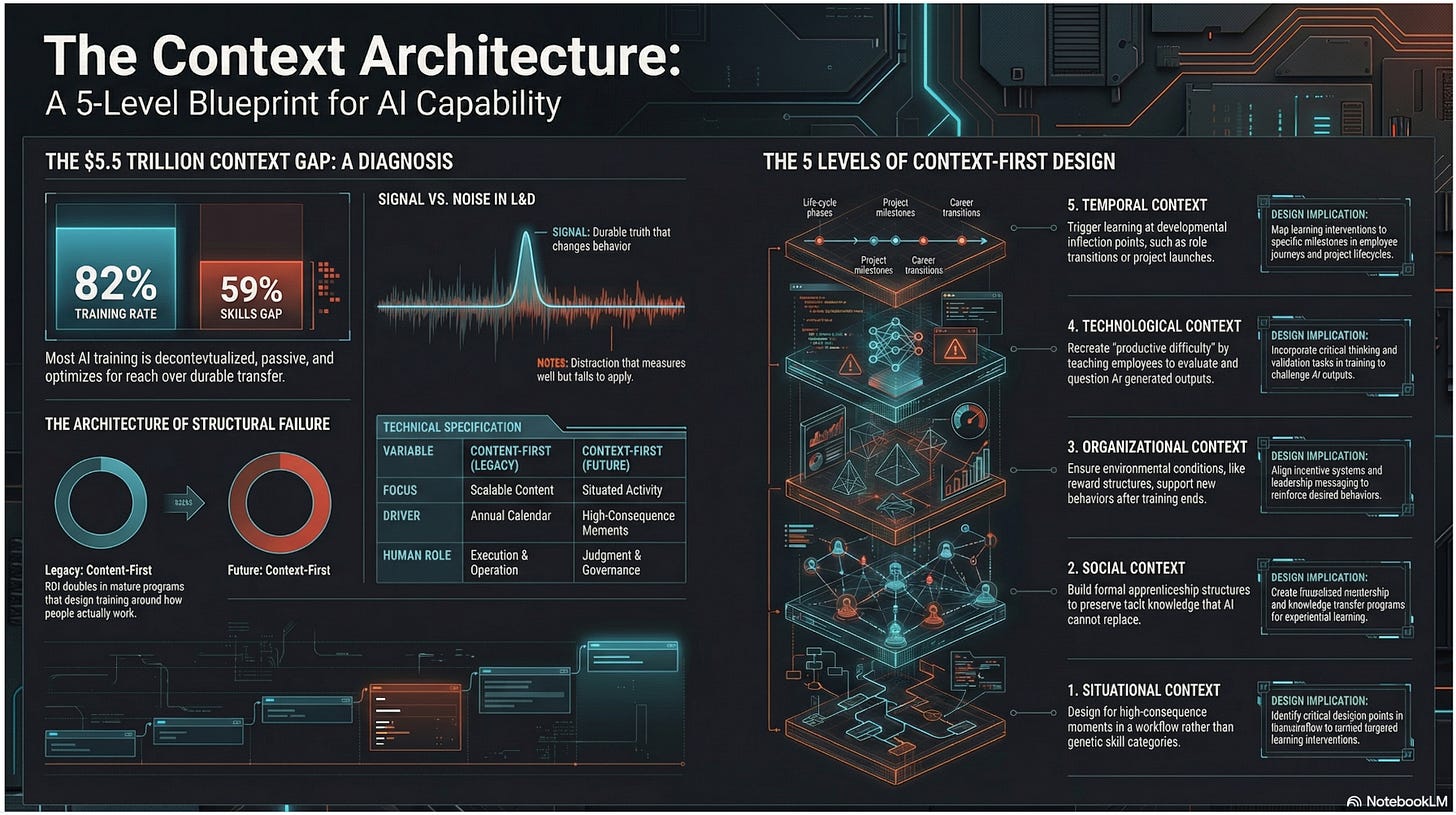

The numbers are now too consistent to dismiss. DataCamp and YouGov’s 2026 research, Why Most AI Training Isn’t Translating to Workforce Capability, surveyed more than 500 enterprise leaders across the United States and United Kingdom and found that 82% of organizations provide some form of AI training, yet 59% still report a significant AI skills gap.[1] IDC estimates that skills shortages will cost the global economy up to $5.5 trillion in 2026 alone in product delays, quality deficits, missed revenue, and eroded competitiveness, and projects that more than 90% of global enterprises will face critical AI skills shortages this year.[2] Meanwhile, Anthropic’s March 2026 Economic Index reports that AI is now involved in at least a quarter of tasks across nearly half of all jobs in its sample.[3]

The training exists. The tools exist, in abundance. Yet the gap persists.

When a performance problem this large coexists with investment this substantial, the diagnosis is rarely that organizations need more of what they are already doing. It is almost always that they are doing the right things in the wrong order, or optimizing the wrong variable entirely.

In the case of enterprise learning and AI readiness, the current variable being optimized is content.

The variable that actually determines whether learning transfers is context.

And I believe most organizations have no systematic way of designing for it.

This article makes a precise argument: the AI skills gap is, at its root, a context gap. Closing it requires a fundamentally different architecture for how organizations think about learning, one that treats context not as a delivery setting but as THE primary condition of capability development. More broadly, it requires a willingness to redesign how work itself is structured for humans and AI together: not layering AI onto old processes, but rebuilding the system around what each does best.

The Evidence of a Structural Failure

The DataCamp findings are not merely a snapshot. They reveal a structural pattern. Of the leaders surveyed, only 35% report having a mature, organization-wide AI upskilling program. The most common training format (online-based learning combined with instructor-led sessions) is exactly the format that cognitive science has shown to be the least effective at producing durable capability.[1] It is often event-driven, decontextualized, and passive. It optimizes for reach and convenience, not for how memory consolidates and skills transfer.

Scaled training must reach widely to have the broadest effect, which pushes design toward the lowest common denominator across diverse roles. The risk is building average workers in bulk.

And the consequence and opportunity are both measurable.

Among organizations with a mature, structured, workforce-wide upskilling program, reports of significant positive AI ROI nearly double. Among those without one, almost no one reports significant returns. The difference between organizations generating AI ROI and those generating expensive activity is not the quality of their AI tools. It is the presence or absence of a prescriptive capability-building system, one designed around how people actually learn, in conditions that reflect their actual work. [1]

IDC identifies the same gap from the demand side. Of CEOs and CHROs, 94% rank AI as their top in-demand skill for 2025, yet only 35% feel they have prepared employees effectively for AI roles.[2] The barriers they cite are telling: lack of talent (46%), unclear ROI on AI programs (26%), and, most revealing, fragmented and inconsistent skills development that leaves 40% of IT leaders unable to measure true AI readiness.

Organizations know they have a problem. They cannot measure it. They cannot locate it precisely. There is no proven map. And the training they are providing is not closing it. These are all symptoms of a gap: not a content gap, but a context gap.

McKinsey’s Superagency in the Workplace report, drawing on surveys of more than 3,600 employees and 238 C-suite executives across six countries, adds a dimension that L&D leaders cannot afford to ignore: employees are three times more likely than their leaders expect to be using AI for at least 30% of their daily work, yet 48% of those employees rank training as the single most important factor for broader adoption, and nearly half report receiving minimal or no training.[14] Despite 92% of companies planning to increase AI investment in the next three years, only 1% describe their organizations as mature in AI deployment, meaning AI is fully integrated into workflows and driving substantial business outcomes.[14]

The ambition is there. The architecture that translates ambition into capability is not.

Organizations that are breaking through share one characteristic: they have moved beyond deploying AI tools into existing workflows and begun redesigning those workflows around what humans and AI each do best. BCG calls this “Reshape,” as opposed to “Deploy,” and the organizations in Reshape mode see employees saving significantly more time, making sharper decisions, and working on more strategic tasks.[15]

The difference is not the technology. It is the organizational context in which the technology operates.

And more recently, Anthropic’s Economic Index adds a learning-curve dimension that L&D leaders have not yet fully absorbed. High-tenure users of AI have developed habits and strategies that allow them to better harness AI’s capabilities: they attempt higher-value tasks and are significantly more likely to elicit successful responses.[3] The advantage scales with experience. In other words, AI capability is not evenly distributed by training; it ACCRUES through situated, repeated engagement with real problems in real contexts. That is not what most corporate AI training programs are delivering.

What Context in Work and Learning Actually Means

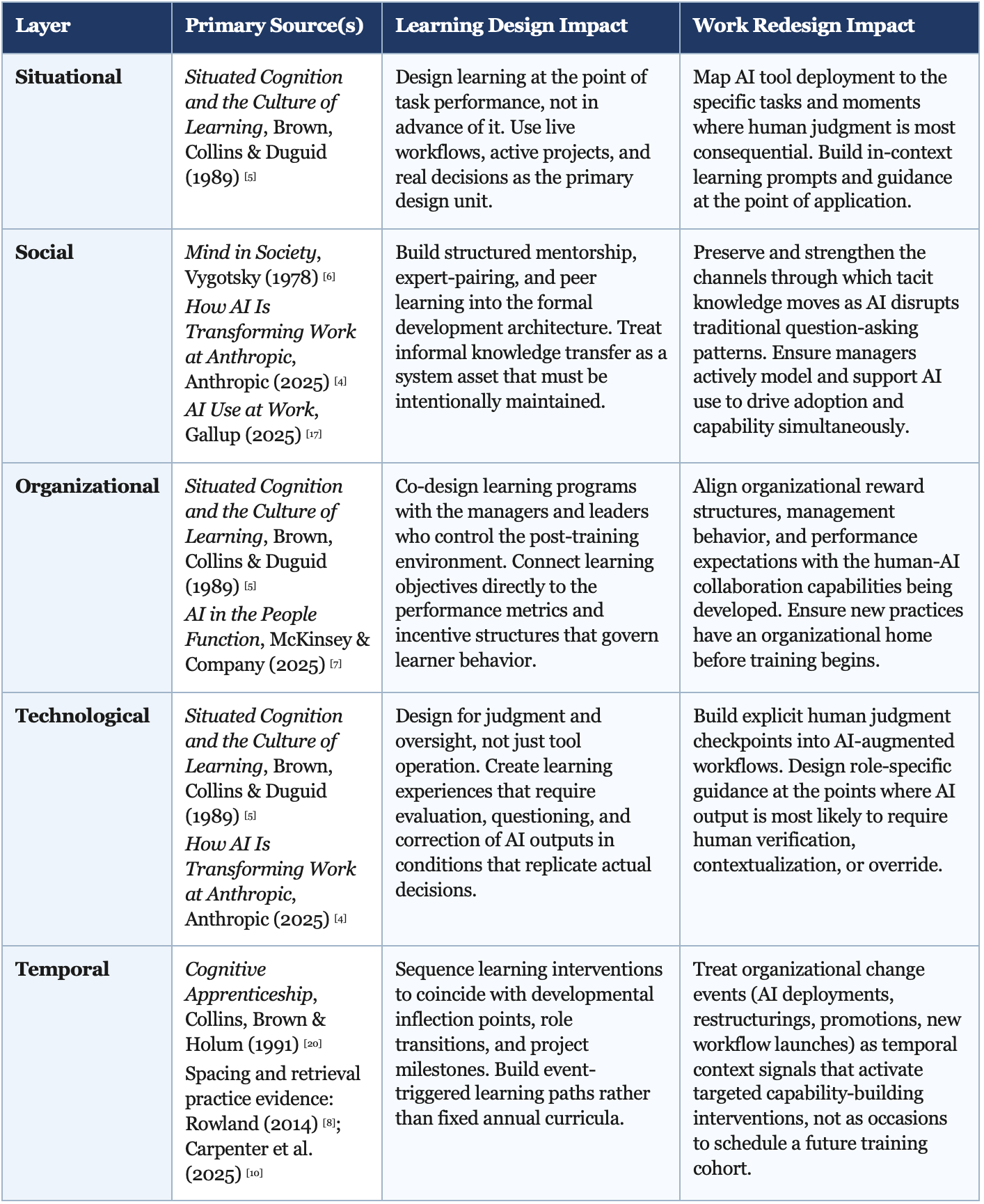

The word “context” is used frequently in learning and talent conversations, but rarely with the precision AI transformation requires. Role, level, business need, third-party skills catalogues, and standard job architectures are typically the full extent of what gets considered. These are demographic proxies and persona preferences, not design variables. A more rigorous, modern definition of context, one that corresponds to how cognitive science understands the relationship between environment and learning, operates at five distinct layers. Each layer is grounded in a distinct body of research. Together, they constitute a practitioner framework for context-first learning and work design.

Before examining each layer, it is worth clarifying two classifications used throughout: signal and noise. For L&D, signal is a timeless, durable truth or intervention that genuinely changes capability, behavior, and performance. For example, practicing what you learn is always a good thing; so is learning with a buddy. Noise is everything else: distraction, or training that feels productive and measures well on an LMS completion dashboard yet does not apply to the realities of the work, workers, and workplace. The central challenge for any learning leader is not a shortage of content. It is the inability to separate signal from noise.

Each layer below identifies what the signal looks like in practice, what noise typically displaces it, and the design implications for organizations redefining work alongside AI.

Situational Context

Grounded in Brown, Collins, and Duguid’s landmark 1989 paper Situated Cognition and the Culture of Learning, which argued that knowledge is not an independent object to be transferred but is co-produced by activity and situation.[5] The immediate task environment is where learning design has the highest leverage.

This layer lives within the moment. Specifically: what challenge is this person facing right now, and what decision do they need to make? Learning that arrives at this layer produces the strongest transfer, because acquisition and application are simultaneous.

This insight extends beyond human learning. As shared in Agentic AI and the Next Intelligence Explosion, Google researchers find that frontier AI reasoning models such as ChatGPT or Perplexity do not improve simply by “thinking longer.” Instead, they simulate internal “societies of thought”: spontaneous internal debates in which perspectives argue, verify, and reconcile to solve complex tasks.[21] The mechanism is structurally identical to what Lev Vygotsky described for human learning in Mind in Society: capability is not a product of individual processing alone, but of the quality of social, deliberative exchange.[6] For L&D, this is not a metaphor. It is a design specification.

The social architecture that produces capable AI reasoning is the same architecture that enhances human judgment.

Consider two employees building the same risk-modeling skill:

Signal: A supply chain manager begins a training module on probabilistic risk modeling that requires her to interview teammates and gather real-life case studies. She encodes and applies that knowledge differently from a peer completing an off-the-shelf module based on industry best practices. The situational context transforms what the learning produces, converting relevant information into applied judgment.

Noise: The default assumption that learning should be highly scalable, scheduled in advance, delivered uniformly, and measured by completion is the primary structural barrier to situational context. Annual curriculum calendars and fixed course catalogs institutionalize this separation at scale, ensuring that most learning investment arrives at the wrong moment.

Design implication: The design unit should include the high-consequence moments in a role or workflow, not the skill category or the topic cluster. Content, sequence, and delivery format should follow from identifying when and where capability most urgently matters.

Social Context

Grounded in Vygotsky’s foundational work in developmental psychology, which established that human development occurs through ongoing social transactions, not through isolated individual processing. Tacit knowledge (the judgment, the craft, the accumulated read of organizational situations that distinguishes an experienced practitioner from a technically qualified one) does not typically live in content libraries. It lives in the social context of a working team, moving through observation, conversation, feedback, and the informal apprenticeship that most organizations have never systematically designed for. [6]

Example: When an experienced sales negotiator walks a junior colleague through a live pricing call, the learning that happens is invisible to any LMS and typically irreplaceable by any course.

The Signal: The experienced negotiator explains their reasoning in real time. The junior colleague is learning how to think through ambiguity in the moment and read organizational dynamics, none of which most negotiation courses (synchronous or asynchronous) contain. Relatedly, Gallup finds that employees with strong manager support for AI are more than twice as likely to use it frequently and twice as likely to believe it helps them perform well.[17]

The Noise: A negotiation skills course may be competent in content, appropriate in format, yet largely disconnected from the relational conditions in which negotiation skill actually develops. When AI becomes the default for questions that previously went to senior colleagues, this social learning channel closes quietly, leaving formal training to carry a load it was never designed for. Only 30% of workers report having manager support for AI, and only 22% of organizations have communicated a clear AI plan.[17]

Design implications: Structured mentorship, deliberate pairing of junior staff with expert practitioners (or of executives with younger experts), and rewards for leadership practices that REQUIRE knowledge transmission must be designed and formalized, not treated as organic or informal behavior that will emerge on its own.

Organizational Context

Supported by Brown, Collins, and Duguid’s assertion that knowledge is situated in the social, cultural, and physical contexts in which it is produced and used, and reinforced, for example, by McKinsey’s People function research on the necessity of embedding learning into the flow of daily work. [5] [7] This layer also demands that a learned capability can be practiced both during training and after it ends. It is the layer where most formal learning investments quietly fail, not in the design of the program, but in the absence of the environmental conditions that would allow a learned behavior to survive contact with the organization.

A manager returns from a three-day leadership program energized and equipped, only to find that nothing about her team’s reporting structure, review cycles, or behavioral expectations has changed. The environment wins.

Signal: Learning interventions are co-designed with business unit leaders, reinforced through visible managerial behaviors (that is, walking the talk), and ideally connected to the activities that govern the learner’s daily decisions. McKinsey’s own L&D practice now embeds learning, coaching, and personalized feedback directly into daily work, precisely because the organizational context of work must be the learning environment, not a separate venue that competes with it.

Noise: The post-training cliff. When learners cannot apply what they learned, the training “event” is diluted, not because the learning was poor but because the organizational context never changed to support it. Programs designed without intentional post-training reinforcement produce high satisfaction scores and low behavioral transfer rates.

Design implication: Organizational alignment with what is being learned must be a precondition of program design, not an afterthought. New capabilities also require reward structures that signal their value. Moreover, when management fails to protect learning time or to ensure post-learning support, that failure should be measured, made visible, and called out.

The Google researchers offer a direct parallel at the systems level. Evans and team argue that scaling AI intelligence requires shifting from dyadic alignment (the dominant model, in which a single AI is corrected by a single human) toward institutional alignment: designing the protocols, checks, and governance structures through which hybrid human-AI systems regulate themselves.[21] Training individual workers to use AI more effectively is the L&D equivalent of the dyadic model. Redesigning the organizational conditions, including the incentives, the norms, the management practices, and the workflow architecture, so that contextual capability develops through the system is the institutional model.

The organizations that will generate durable AI ROI are not the ones that trained the most individuals and measure success by completions or the number of individual badges and credentials. They are the ones that built the institutional context in which continuous learning is structurally guaranteed.

Technological Context

Tools do not merely support cognition but fundamentally reshape it, as Anthropic’s research again reinforces.

“When the tools with which people work include AI agents capable of performing increasingly complex cognitive tasks autonomously, the human learning challenge shifts from execution to judgment, from skill in task performance to skill in task direction, evaluation, and oversight.” [5]

This is, without doubt, the layer moving fastest, and the one I believe most existing learning programs are most influenced by, yet least prepared for.

The Google and Berggruen Institute team’s concept of the “human-AI centaur” makes the design implication unavoidable. They describe human-AI centaurs as composite actors—neither purely human nor purely machine—where each person may inhabit diverse ensembles many times a day: one human directing many AI agents, one AI serving many humans, many humans and AIs collaborating in shifting configurations. The workforce capability that organizations need to develop is not fluency with a tool. It is the judgment and contextual literacy required to function as the human half of a centaur, which means the design target for learning is a role that does not yet appear on any job description. [21]

Example: An analyst completes an AI tools course, returns to work and faces a financial model generated by Claude with an error embedded three levels deep. Nothing in the course prepared her to find it.

The Signal: AI-assisted workflows must include explicit, augmented checkpoints for human judgment, structured after-action reviews of AI outputs, and role-based learning that teaches people to evaluate and direct AI rather than merely operate it.

The Noise: An AI awareness module that shows employees what a large language model is, how it works, and what the company’s usage policy says leaves them entirely unprepared unless hands-on trial and error is built in.

For learning designers: Learning programs must deliberately recreate the friction that AI removes. Employees need repeated exposure to situations that require them to specify, evaluate, question, and correct AI-generated work in conditions that replicate the actual decisions they face.

Temporal Context

Supported by Collins, Brown, and Holum’s Making Thinking Visible, which emphasizes that learning sequences should be structured around developmental readiness and exposure to progressively complex challenges, not by a calendar. The chronological dimension of learning (where the learner sits in the arc of their career, their tenure, their accomplished projects, or their organization’s change journey) is one of the most under-designed variables in enterprise L&D. The same intervention lands differently depending on when it arrives. [20]

The same change management curriculum lands entirely differently for a first-time leader than for someone who has already lived through three organizational restructures.

Signal: Learning arrives at developmental inflection points. The new manager whose first direct report is already struggling. The senior leader whose organization has just committed to an AI-first workflow redesign. The analyst who has just been handed a generative AI-assisted deliverable for the first time. These moments create receptivity, urgency, and the memory consolidation that spaced learning science predicts.[8,10]

Noise: The fixed annual L&D calendar. The pressure to “complete your learning hours.” The allocation of development to a single quarter. These deliver the “right” content, but to people who are not yet in a situation to use it, or too late to change what has already occurred.

Design implication: Key learning triggers should be event-driven, not calendar-driven. Role transitions, project launches, AI deployments, team restructurings, and promotion decisions are all temporal context signals that should activate targeted, just-in-time learning interventions and not wait for the next scheduled cohort.
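The event-driven principle can be illustrated with a minimal Python sketch. It assumes a hypothetical feed of workforce events; `WorkEvent`, the event-type names, and the `TRIGGER_RULES` mapping are illustrative inventions, not any HR or learning platform’s API. The point is structural: each event schedules its intervention on the day it occurs instead of waiting for a calendar slot.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

# Hypothetical event record; field names are illustrative assumptions.
@dataclass(frozen=True)
class WorkEvent:
    employee_id: str
    event_type: str
    occurred_on: date

# Hypothetical mapping from temporal context signals to targeted interventions.
TRIGGER_RULES: dict[str, str] = {
    "role_transition": "first-90-days coaching for the new role",
    "project_launch": "pre-mortem workshop with the project team",
    "ai_deployment": "hands-on practice evaluating AI outputs in the new workflow",
    "team_restructure": "change-leadership session for affected managers",
    "promotion": "peer cohort for newly promoted leaders",
}

def route_event(event: WorkEvent) -> Optional[str]:
    """Return the intervention this event triggers, or None if no rule applies."""
    return TRIGGER_RULES.get(event.event_type)

def plan_interventions(events: list[WorkEvent]) -> list[tuple[str, date, str]]:
    """Schedule each triggered intervention on the date its event occurs,
    rather than deferring it to a fixed calendar cohort."""
    plan = []
    for event in sorted(events, key=lambda e: e.occurred_on):
        intervention = route_event(event)
        if intervention is not None:
            plan.append((event.employee_id, event.occurred_on, intervention))
    return plan

if __name__ == "__main__":
    events = [
        WorkEvent("e-102", "ai_deployment", date(2026, 4, 6)),
        WorkEvent("e-017", "role_transition", date(2026, 3, 30)),
        WorkEvent("e-055", "quarterly_review", date(2026, 4, 1)),  # no rule: ignored
    ]
    for employee, when, intervention in plan_interventions(events):
        print(employee, when.isoformat(), intervention)
```

Events with no matching rule, such as the routine quarterly review in the example, trigger nothing, so the system stays quiet until a genuine temporal context signal appears. A real deployment would first need an agreed event taxonomy and reliable event feeds, which many organizations would have to build.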

Summary (figure created with Claude Sonnet 4.6)

A Context-First Architecture for L&D

Reorienting enterprise learning around context is not a routine program redesign. It is an architectural decision about systems and ways of working, one that changes what L&D measures, what it builds, how it operates, and where it invests. And it is inseparable from a more fundamental question: what is work now and next, and how must it be reframed for humans and AI to operate together effectively?

The World Economic Forum’s Future of Jobs Report 2025, drawing on data from more than 1,000 companies across 55 economies, projects that by 2030, the share of tasks performed predominantly by humans will fall from 47% today to roughly 33%, with human-machine collaboration rising to fill much of that space. Sixty-three percent of employers already identify the skills gap as the single biggest barrier to their business transformation: not technology access, not budget, but human capability.[16] These are not distant projections. They describe a structural shift in the conditions of work that is already underway and that most enterprise learning strategies were not designed to address.

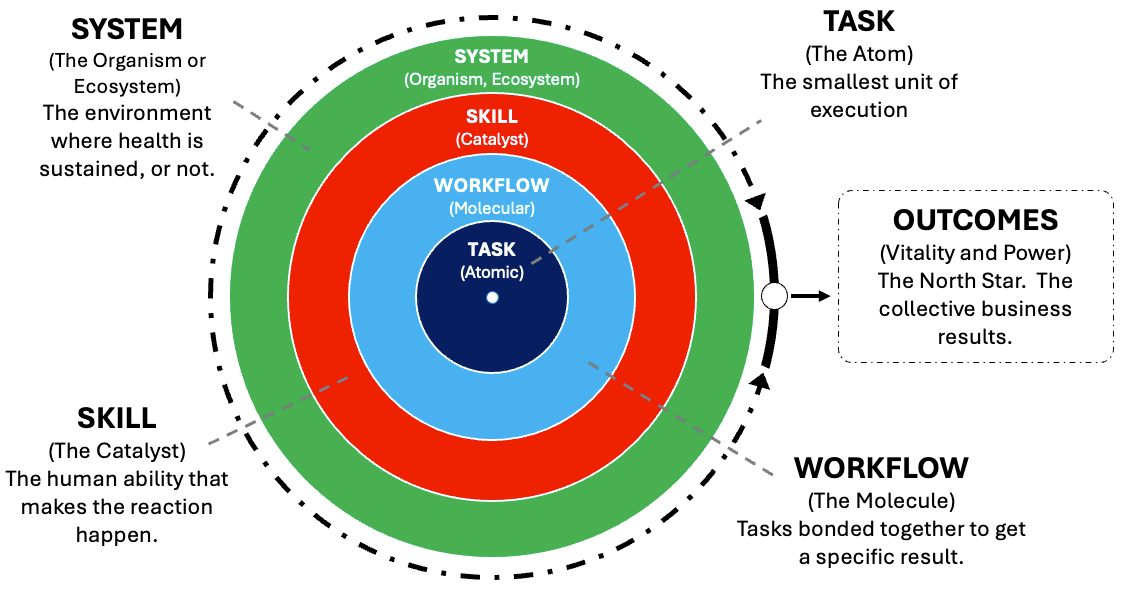

This is similar to the systems-level diagnosis in my workforce transformation Substack series The Purpose Is People: A Playbook for Reframing Modern Work. Most organizations are engaging in what it terms “consensus theater”, layering AI on top of broken processes to satisfy stakeholders without genuinely redesigning how work gets done.[19] The alternative unit of analysis the series proposes is not the job, but an atomic hierarchy of work: tasks, workflows, skills, and systems, all intentionally leading to outcomes. Each must also be evaluated for what belongs to AI, what belongs to humans, and what emerges from the collaboration between them. [19]

Source: The Purpose Is People, Part 1

AI is Increasingly Making Execution a Commodity

The competitive differentiator is no longer the ability to produce. It is the ability to govern: to specify what is needed, to evaluate what is produced, to direct what happens next, and to take responsibility for the outcome. Governance is a human capability that must keep advancing. It is learned alongside teammates, human and AI, and it is exactly what context-first learning is designed to build.

Start with the moments that matter, not the skills that are missing. The conventional L&D design process begins with a skills gap analysis and works toward content that fills the gap. A context-first design process begins with the specific moments in a role where capability or its absence has the highest consequence. These moments become the design units. Content, sequencing, and delivery all follow from them. This is a fundamentally different brief, and it requires refreshed partnerships with business leaders, process owners, and frontline managers that most L&D functions are not currently sustaining.

Rebuild the apprenticeship infrastructure before it disappears. The informal learning system, the social context layer, is under active pressure from AI adoption. When AI becomes the default first source for questions that previously went to colleagues, the relational channel through which tacit knowledge, professional judgment, and organizational wisdom travel begins to close.[4] Organizations need to design for this deliberately: through structured mentoring programs, through deliberate rotation of junior employees into contexts where expert judgment is visible and discussable, and through leadership practices that reward knowledge transmission as a valued activity, not an inefficiency.

Develop contextual fluency as a distinct and measurable capability. The Axios analysis of the emerging AI proficiency divide identifies the core skill precisely: the ability to construct, communicate, and manage context for AI systems, for colleagues, and for one’s own decision-making.[12] This is not the same as AI tool proficiency. A user who can operate an AI tool is not necessarily a user who can give that tool enough contextual precision to produce a trustworthy output. Developing this capability requires situated practice, that is, repeated exposure to situations that require learners to specify what they know, what they need, what constraints apply, and how to evaluate whether the result is adequate. BCG finds that regular AI usage is sharply higher among employees who have access to hands-on, experimentation- and context-heavy training and coaching, which underscores my earlier emphasis: the relational and situational conditions of learning determine whether contextual fluency develops or stalls.

Redesign measurement from activity to capability. IDC’s warning that 40% of IT leaders cannot measure true AI readiness because of fragmented, inconsistent skills data describes a measurement architecture problem with a known solution, which I’ll state loudly: CONNECT LEARNING DATA WITH WORK DATA. The signal of a working context-first L&D system is not completion rates; it is capability that shows up in the work. As I argue in my Substack series [see Emerging AI-Optimized Tech and Tools], unless data interoperability is treated as a first principle, this connection will not occur with accuracy or relevance. Organizations that are generating AI ROI have built this connective tissue. They can see where performance gaps are emerging in actual workflows, and they design learning interventions that target those gaps rather than arriving by calendar. The architecture must be dynamic, continuous, and context-aware.
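Connecting learning data with work data can be sketched in a few lines of Python. This is a minimal illustration under loudly stated assumptions: the record shapes, the `capability_gaps` function, the quality metric (share of AI-assisted deliverables passing downstream review), and the 0.8 threshold are all hypothetical, and a real implementation would depend on the interoperable data described in the paragraph above.

```python
from collections import defaultdict

# Hypothetical learning data: (employee_id, capability) pairs marked complete in an LMS.
completions = {
    ("e-01", "ai_output_review"),
    ("e-02", "ai_output_review"),
    ("e-03", "ai_output_review"),
}

# Hypothetical work data: share of AI-assisted deliverables that passed
# downstream quality review, per (employee_id, capability).
work_quality = {
    ("e-01", "ai_output_review"): 0.94,
    ("e-02", "ai_output_review"): 0.61,
    ("e-04", "ai_output_review"): 0.58,
}

def capability_gaps(
    completions: set[tuple[str, str]],
    work_quality: dict[tuple[str, str], float],
    threshold: float = 0.8,
) -> dict[str, list[tuple[str, str]]]:
    """Join learning data with work data and classify where gaps appear in actual work."""
    gaps: dict[str, list[tuple[str, str]]] = defaultdict(list)
    for key, quality in sorted(work_quality.items()):
        if quality >= threshold:
            continue  # capability is showing up in the work; no gap flagged
        if key in completions:
            gaps["completed_but_not_transferring"].append(key)
        else:
            gaps["untrained_and_struggling"].append(key)
    # Completions with no matching work data cannot be evaluated for transfer at all.
    gaps["no_work_data"] = sorted(completions - set(work_quality))
    return dict(gaps)

if __name__ == "__main__":
    for category, items in capability_gaps(completions, work_quality).items():
        print(category, items)
```

The design choice worth noticing is that a completion never clears a gap on its own; only the work data does. A completion that sits alongside weak performance is flagged as non-transfer, which is precisely the signal a completion dashboard hides.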

Protect productive difficulty. The organizational instinct during AI deployment is to reduce friction everywhere. In learning, this instinct is dangerous. Spaced repetition, retrieval practice, interleaving, and stretch assignments, all of the signal mechanisms that produce durable capability, require effort that feels harder than passive exposure. [8,9,10] This is not a design flaw. It is the mechanism. L&D leaders must make the case, with evidence, for maintaining the difficulty and challenge that drive deep learning, even as AI makes surface-level performance cheaper and easier to simulate. The organizations that confuse fluent performance with durable competence will discover the difference when the situation changes and the underlying capability is not there.

Source: The Execution Bias

The Learning Curve Is the Competitive Divide

Anthropic’s March 2026 Economic Index report, titled, pointedly, “Learning Curves,” provides the most precise empirical account yet of what context-driven capability development looks like at scale. The report’s central finding is that high-tenure Claude users have a 10% higher success rate in their AI conversations than newer users, an advantage that is not explained by task selection, country of origin, or other observable factors.[3]

“Higher tenure, higher success. In general, the most seasoned Claude users employ it more often for higher-education tasks and less often for personal use cases. For example, people who have been using Claude for 6 months or more have 10% fewer personal conversations and a 6% higher education level reflected in their inputs. Most strikingly, people in this higher-tenure group have a 10% higher success rate in their conversations, an association that is not explained by their task selection, country of origin, or other factors. While this could reflect sophistication of early adopters, it could also be evidence of learning-by-doing, where people get better at using Claude through experience.” [3]

The hopeful evidence of “learning-by-doing” is a pattern in which people get better at working with AI through contextually situated, repeated experience. That phrase is not a learning science metaphor. It is a direct description of what context-first learning design is built to produce.

The report raises an important corollary: if effective AI use requires complementary skills and expertise, “and if such skills can be acquired through use and experimentation, then the benefits from early adoption may be self-reinforcing.”

Early adopters are building competitive, contextual fluency.

Contextual fluency unlocks higher-value work. Higher-value work accelerates further development. Organizations that have not yet designed the conditions for this cycle to begin (situationally, socially, organizationally, technologically, and temporally) are not just behind on training. They are falling behind on a compounding curve.

Axios’s March 2026 analysis named this dynamic clearly: the divide is not between those who use AI and those who do not. It is between experienced users who have developed the contextual fluency to direct AI toward high-value outcomes and casual users who have not. This divide is already creating new forms of economic inequality at the level of individuals, teams, and organizations. [12]

Google’s researchers close their report with a line that may be the most concise statement of what this article has argued throughout: “The question is not whether intelligence will become radically more powerful, but whether we will build the social infrastructure worthy of what it is becoming.” [21]

The social infrastructure of an organization’s intelligence is its Learning Architecture: the conditions under which people encounter hard problems, calibrate against expert judgment, receive feedback in the moments that matter, and return to prior learning in contexts that make it stick. That is precisely what the five layers of context are designed to build.

Similarly, the Anthropic data reframes the mission with unusual precision. The question is not how to give more people access to AI tools. Nearly half of all jobs already have at least a quarter of their tasks touched by Claude.[3] The question is how to rewire organizational conditions so that the learning curve runs in your organization’s favor: conditions where it is okay to dive into the deep end, protected by curiosity, challenge, and a culture built on courage.

The skills gap is large, and the compounding effects are already visible.

What closes the gap is not more content and not more courses. That focus is L&D’s current, myopic Cyclops.

It is the context in which people encounter, apply, and return to the capabilities that our AI opportunity and competitive conditions actually require.

This article was written and formatted with Claude Sonnet 4.6, with additional research via Gemini 3 and ChatGPT. Google’s NotebookLM provided ongoing challenge and crafted the visuals. AI’s spontaneous debate helps when context is unclear and when verification is required.

The views and opinions expressed in this article are solely those of the author and do not necessarily reflect the views of the author’s employer or any affiliated organizations.

Licensing

Unless otherwise noted, the contents of this series are licensed under the Creative Commons Attribution 4.0 International license; CC0 1.0 Universal (CC0 1.0) Public Domain Dedication.

Should you choose to exercise any of the 5R permissions granted you under the Creative Commons Attribution 4.0 license, please attribute me in accordance with CC’s best practices for attribution.

The opening image, The Modern Cyclopes (Iron Rolling Mill) by Adolph von Menzel (1875), is in the public domain via Commons.

If you would like to attribute me differently or use my work under different terms, contact me at https://www.linkedin.com/in/marcsramos/.

Additional

Source: Google NotebookLM

References

[1] Cornelissen, J., & Heidmann, L. (2026). 2026 state of data & AI literacy report. DataCamp. https://www.datacamp.com/blog/the-ai-skills-gap-in-2026-why-most-ai-training-isn-t-translating-to-workforce-capability

[2] Workera. (2026). The $5.5 trillion skills gap: What IDC’s new report reveals about AI workforce readiness. Workera. https://workera.ai/blog/the-5-5-trillion-skills-gap-what-idcs-new-report-reveals-about-ai-workforce-readiness

[3] Anthropic. (2026, March). Economic index: Learning curves. Anthropic. https://www.anthropic.com/research/economic-index-march-2026-report

[4] Anthropic. (2025, December). How AI is transforming work at Anthropic. Anthropic. https://www.anthropic.com/research/how-ai-is-transforming-work-at-anthropic

[5] Brown, J. S., Collins, A., & Duguid, P. (1989). Situated cognition and the culture of learning. Educational Researcher, 18(1), 32–42. https://doi.org/10.3102/0013189X018001032

[6] Vygotsky, L. S. (1978). Mind in society: The development of higher psychological processes. Harvard University Press. https://www.hup.harvard.edu/books/9780674576292

[7] McKinsey & Company. (2025, November). AI in the people function: Building leaders, improving processes, creating value. McKinsey & Company. https://www.mckinsey.com/featured-insights/people-in-progress/ai-in-the-people-function

[8] Rowland, C. A. (2014). The effect of testing versus restudy on retention: A meta-analytic review of the testing effect. Psychological Bulletin, 140(6), 1432–1463. https://doi.org/10.1037/a0037559

[9] Adesope, O. O., Trevisan, D. A., & Sundararajan, N. (2017). Rethinking the use of tests: A meta-analysis of practice testing. Review of Educational Research, 87(3), 659–701. https://doi.org/10.3102/0034654316689306

[10] Carpenter, S. K., Witherby, A. E., & Tauber, S. K. (2025). The distributed practice effect on classroom learning: A meta-analytic review of applied research. Educational Psychology Review, 37(2). https://pmc.ncbi.nlm.nih.gov/articles/PMC12189222/

[11] Butowska, E., Kliś, P., Zawadzka, K., & Hanczakowski, M. (2024). The role of variable retrieval in effective learning. Proceedings of the National Academy of Sciences, 121(46). https://pmc.ncbi.nlm.nih.gov/articles/PMC11536137/

[12] VandeHei, J., & Allen, M. (2026, March 24). Behind the curtain: America’s next class war will be over AI fluency. Axios. https://www.axios.com/2026/03/24/ai-use-inequality-class

[13] PwC. (2025). AI jobs barometer. PricewaterhouseCoopers. https://www.pwc.com/gx/en/news-room/press-releases/2025/ai-linked-to-a-fourfold-increase-in-productivity-growth.html

[14] McKinsey & Company. (2025, January). Superagency in the workplace: Empowering people to unlock AI’s full potential at work. McKinsey Global Institute. https://www.mckinsey.com/capabilities/mckinsey-digital/our-insights/superagency-in-the-workplace-empowering-people-to-unlock-ais-full-potential-at-work

[15] Boston Consulting Group. (2025, June). AI at work 2025: Momentum builds, but gaps remain. BCG. https://www.bcg.com/publications/2025/ai-at-work-momentum-builds-but-gaps-remain

[16] World Economic Forum. (2025, January). The future of jobs report 2025. WEF. https://www.weforum.org/publications/the-future-of-jobs-report-2025/

[17] Gallup. (2025). AI use at work has nearly doubled in the past two years. Gallup. https://www.gallup.com/workplace/701195/frequent-workplace-continued-rise.aspx

[18] Lane, M., Williams, M., & Broecke, S. (2024). AI adoption in the workplace: Skills, productivity, and organizational change. OECD Social, Employment and Migration Working Papers. OECD Publishing. https://www.oecd.org

[19] Ramos, M. (2025, December 15). Part 1: The purpose is people: A playbook for reframing modern work. The Purpose Is People [Substack]. https://open.substack.com/pub/ramosmarcs/p/part-1-the-purpose-is-people-a-playbook

[20] Collins, A., Brown, J. S., & Holum, A. (1991). Cognitive apprenticeship: Making thinking visible. American Educator, 15(3), 6–11, 38–46.

[21] Evans, J., Bratton, B., & Agüera y Arcas, B. (2026). Agentic AI and the next intelligence explosion. Science, 391, eaeg1895. https://doi.org/10.1126/science.aeg1895