Part 1: Choosing Between Cowork and Codex for Your L&D Team

A guide for considering the latest, greatest, and most durable agentic approach for L&D, deconstructing roles, and leading the inevitable change from vendor-first to build-first.

Credit: Google NotebookLM

Let me describe your L&D team and you tell me if I’m wrong. You’ve got a few six-figure content vendor contracts on auto-renew. An LMS that functions primarily as a compliance tracker. Instructional designers who spend more time in stakeholder meetings than enhancing their Gift of Design. An operations team focused on measuring learning hours and consumption. And your own developers and facilitators helping vendors use unspent opex money at the end of the year so it’s not “wasted”.

And an uncomfortable truth sitting in your chest like an intentionally unread email: your entire operating model and ways-of-working are built for a version of L&D that’s already obsolete. And everyone on your team knows it, even if no one’s said it.

And we wonder why many still treat L&D like a service bureau.

This week’s latest L&D conundrum…

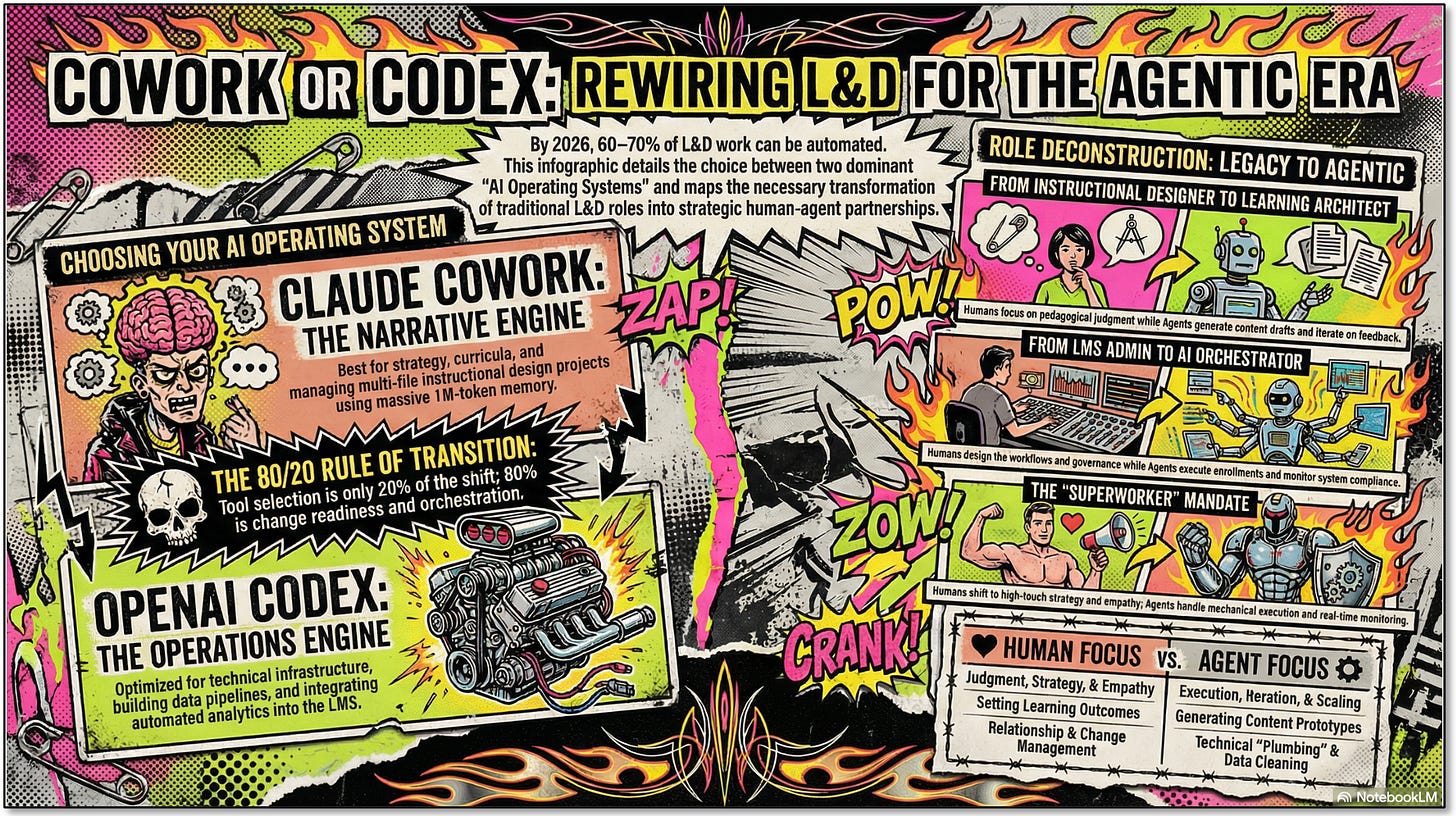

Last month (January 2026), Anthropic launched Claude Cowork: a general-purpose AI agent that can autonomously manage files, draft documents, and execute multi-step workflows on your desktop. [1] Days later, the Cowork plugin launch triggered what analysts called the “SaaSpocalypse,” wiping an estimated $285 billion off enterprise software stocks in a single week. [2]

OpenAI countered with the Codex app and GPT-5.3-Codex, its most capable agentic coding model, now running 25% faster with deeper autonomous workflow capabilities. [3]

These aren’t chatbots. They’re agents. They plan, they act, they iterate, and they’re already reshaping how knowledge work gets done.

The question for L&D leaders isn’t whether to adopt them. For me, it’s which one becomes your L&D team’s Operating Model, literally, and whether we have the change management muscle and mindset agility to actually make the shift. [See Part 2 for more on an LDOM approach.]

The Real Question Isn’t the Tool. It’s the Transition.

Let me be direct: if you’re evaluating the latest and greatest, whether Google’s Gemini-powered ecosystem, Claude Cowork, or OpenAI Codex, purely as “tools,” you’re already thinking about this wrong. The tool selection is 20% of the decision. The other 80% is whether your L&D organization is ready to stop being a buyer of finished learning products and start being a builder and orchestrator of learning systems.

That is, the other 80% is change management and change readiness.

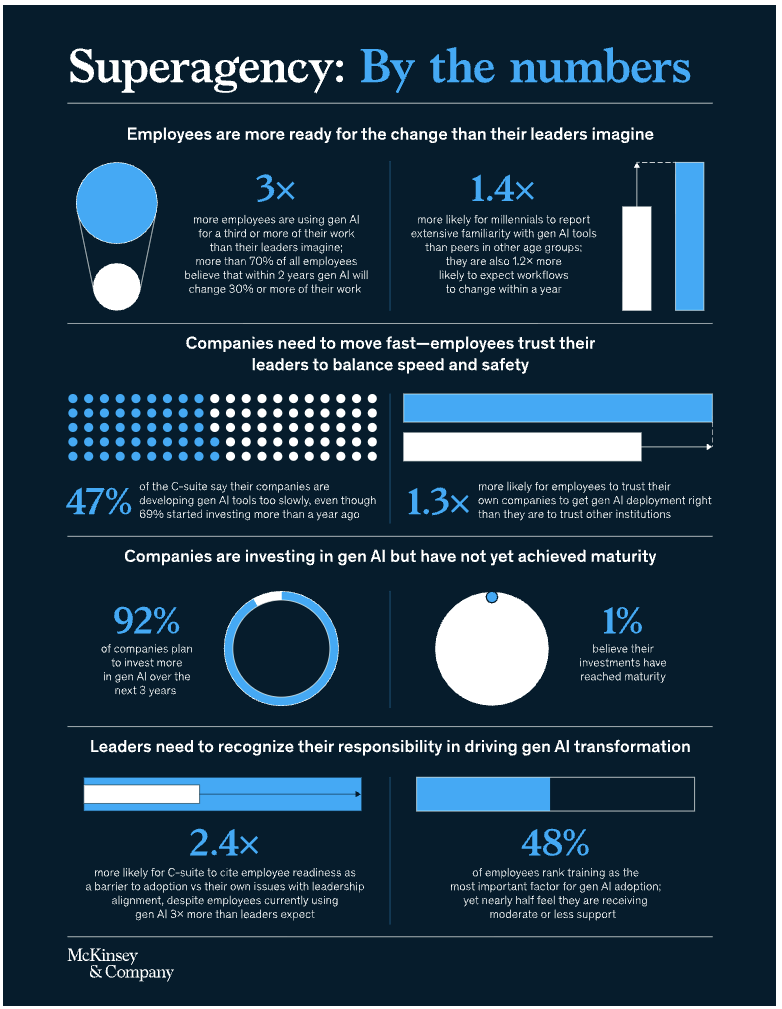

Josh Bersin’s research makes the stakes clear: 60–70% of the work currently done by training and development teams can be automated by AI agents. [4] That’s not a distant forecast. That’s the 2026 imperative. The organizations that thrive won’t be the ones with the best vendor contracts. They’ll be the ones whose L&D teams learned to coordinate humans and agents as a unified system.

McKinsey’s Superagency research found that employees are three times more likely than leaders expect to already be using generative AI for at least 30% of their daily work—yet only 1% of organizations consider themselves AI-mature. [5]

The gap isn’t technological. It’s organizational. And L&D, of all functions, should be leading the redesign, not waiting for IT to hand down a roadmap.

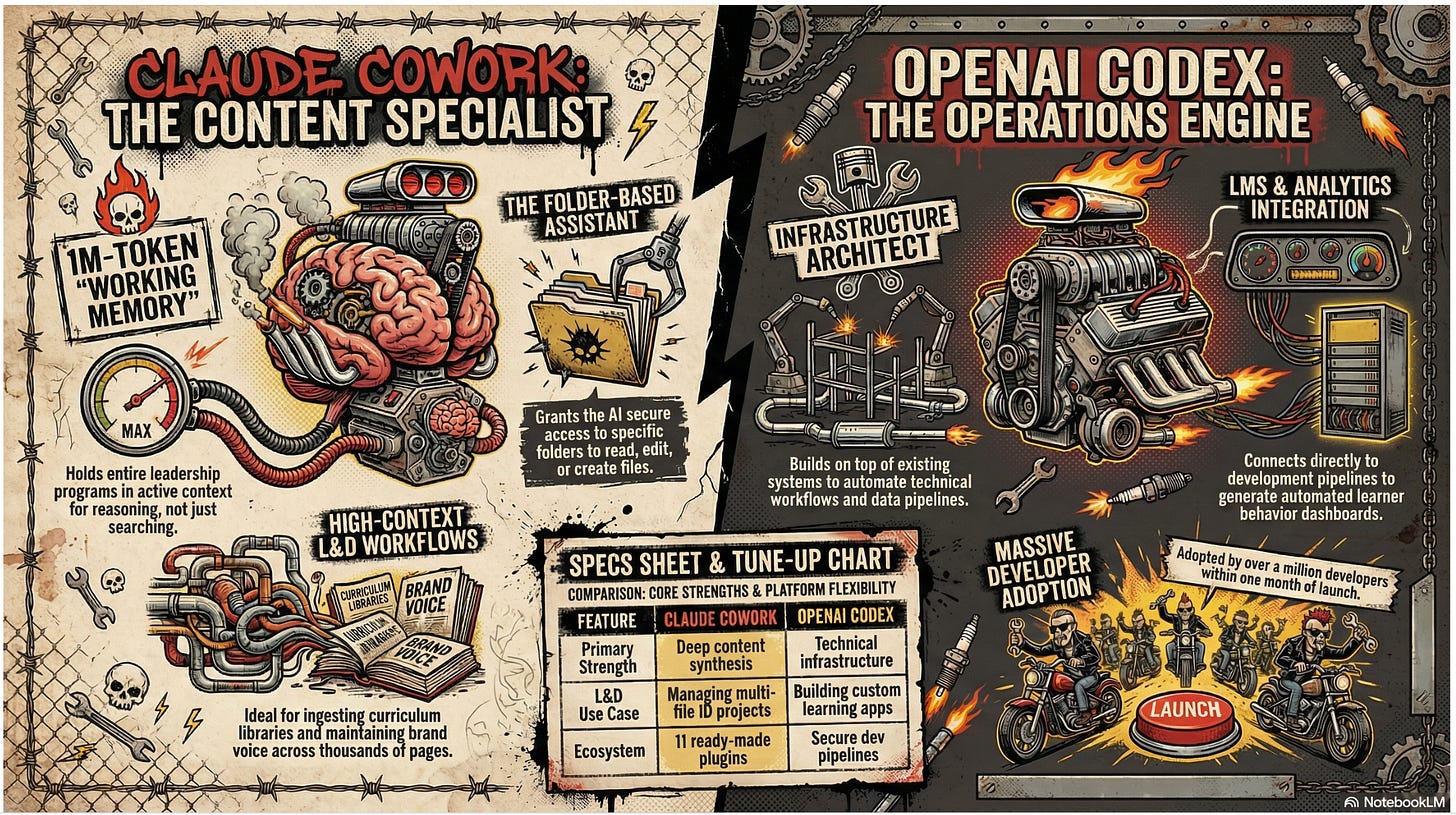

Cowork vs. Codex: What Each Actually Does

For now, I’m reviewing Claude Cowork and OpenAI’s Codex not just as the L&D choice du jour, but as a new L&D operating model that can bridge all learning functions, workflows, and ways-of-working internally and cross-functionally, and identify change gaps to fill. Both platforms have evolved far beyond their origins as coding tools. Here’s how they map to L&D work in February 2026:

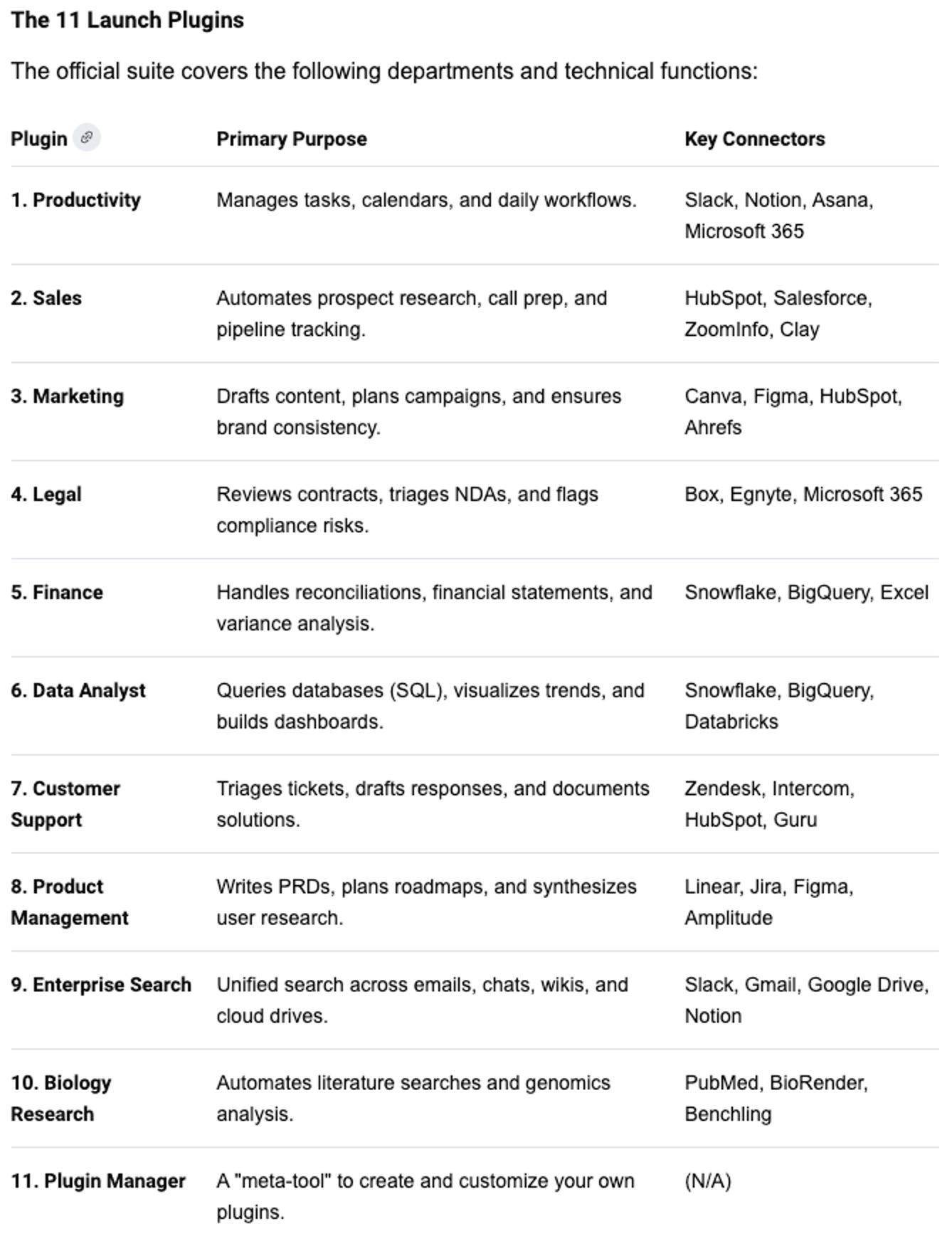

Claude Cowork works like giving an AI assistant access to specific folders on your computer. You decide exactly which files it can read, edit, or create. It operates in a protected environment that keeps your data secure, can work on multiple tasks simultaneously, and connects to 11 ready-made plugins for productivity, enterprise search, marketing, and sales workflows. [1] [See Appendix A for the plugins.] The plugin system has already been adopted by Microsoft, Cursor, and OpenAI itself, which means any capability built for Cowork works across platforms, with no vendor lock-in. [6]

For L&D, this makes Cowork exceptionally strong at work that requires deep context: ingesting entire curriculum libraries, maintaining brand voice across thousand-page document sets, synthesizing Kirkpatrick Level 1 learner feedback (otherwise known for lacking value) with business strategy, and managing multi-file instructional design projects. The 1M-token context window on Opus 4.6 functions as the model’s working memory, the cognitive workspace where it actively holds and reasons across information (Baddeley, 2010), meaning it can hold your entire leadership development program in active context at once, not just search for pieces of it. [7]

OpenAI Codex is a cloud-based AI agent designed for building and engineering software. It runs tasks in secure, isolated environments preloaded with your existing systems and code. Where Cowork thinks across your content, Codex builds on top of your infrastructure — executing multiple tasks in parallel, automating repetitive technical workflows, and connecting directly to development pipelines. [3] For L&D, Codex is your operations engine: constructing data pipelines, integrating with your LMS, generating automated analytics dashboards that track learner behavior, and building custom learning applications from scratch. Over a million developers adopted Codex within a month of launch. [8]

Source: Google NotebookLM

My Initial Recommendation (as of February 2026)

Adopt Claude Cowork as the primary platform for the broader L&D team.

Here’s why: the majority of L&D’s transformation challenge is not technical. It’s narrative, strategic, context-heavy, and human. L&D teams need to redesign programs, synthesize complex organizational context, write and iterate on curricula, manage multi-stakeholder feedback, and produce outputs that require deep reasoning about human behavior change. Cowork’s contextual reasoning, agentic file management, and plugin ecosystem map directly to these needs. Use Codex tactically for the operations and analytics workstreams (building dashboards, integrating data pipelines, constructing feedback bots), where code-native execution gives it a clear edge.

This is not an abstract debate. It’s a portfolio decision. Your Learning Architects use Cowork. Your Learning Ops engineers use Codex. Your team leverages and coordinates both.

BIG and IMPORTANT caveat. If your enterprise already has an approved, multi-year solution in place (Gemini, OpenAI, Perplexity, Anthropic…), stick with it. Don’t be distracted. Go deep rather than go wide across the many LLM/GPT variations.

With this called out, the L&D org must, still, deconstruct itself.

L&D Must Redesign Itself Before It Can Redesign the Enterprise

This is the uncomfortable truth most L&D functions are avoiding: you cannot credibly lead enterprise AI transformation if your own team is still organized around legacy roles and vendor-dependent workflows.

AI’s real transformation requires demolishing foundational assumptions, not decorating them with new technology.

The real opportunity is shedding yesterday’s logic so we can multiply human and commercial potential, and above all, sustain human interconnectedness.

For this change to happen, L&D, Talent and HR need to lead by example and first redesign themselves.

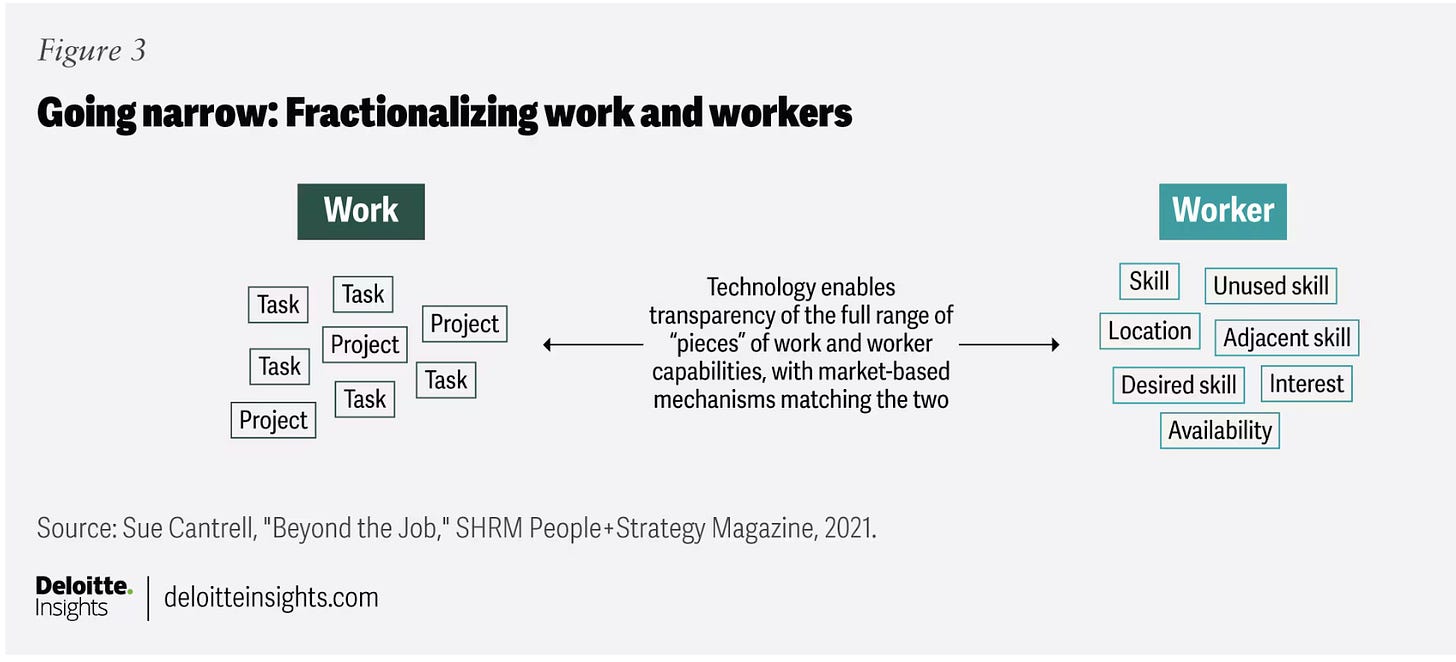

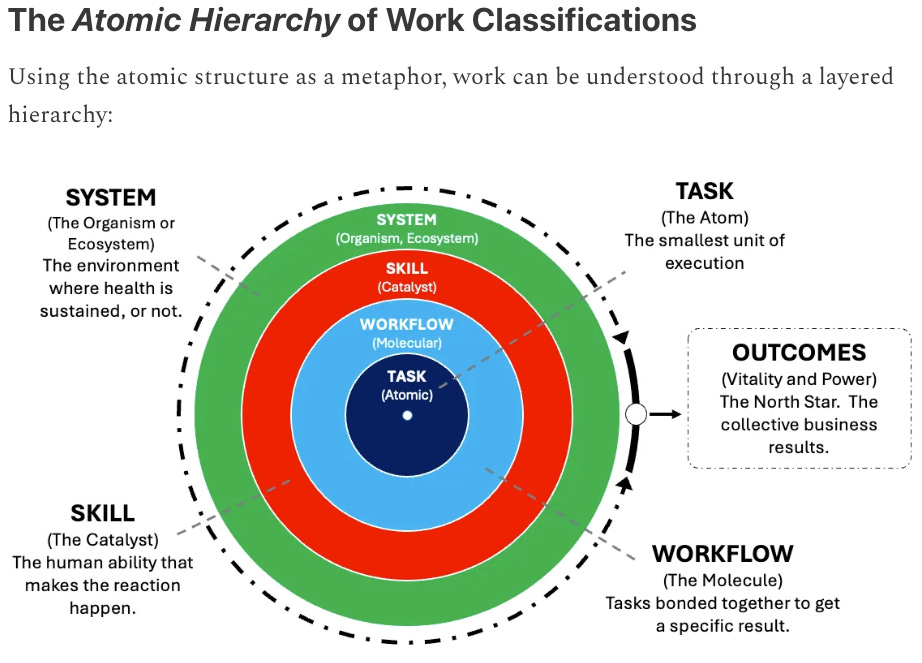

Deloitte’s recent research on work deconstruction argues that organizations must break jobs into tasks, then reconstruct work based on what humans and AI each do best—moving from “jobs” to “skills and outcomes.”

“Interest in task-based workforce planning is growing, driven by the pace of change, automation, gig work, remote models, and advances in natural-language processing to analyze tasks. Disaggregating jobs into tasks makes it easier to rebundle work—some done remotely, some automated, some by external talent, and some even by customers (think self-service kiosk checkout in retail stores). Because tasks are easier to change than jobs or people, organizations gain flexibility to reconfigure work and close talent gaps with greater speed and agility.” [9]

From jobs to skills to outcomes: Rethinking how work gets done

I also wrote about this a few weeks ago in my series, “The Purpose Is People: A Playbook for Reframing Modern Work”, with a similar deconstruction of work via a Task-Centric “Atomic Hierarchy” of work.

Ravin Jesuthasan’s MIT Sloan analysis identifies five actions: start with the work itself, use AI as a force multiplier, redesign work structures holistically, plan for freed-up capacity, and make work design a core leadership capability. [10] Deloitte is even redesigning its own 181,500-person US workforce with new job titles effective June 2026, replacing the traditional analyst-consultant-manager pyramid with role families that reflect how AI has changed the work. [11]

L&D needs to do the same, and do it first.

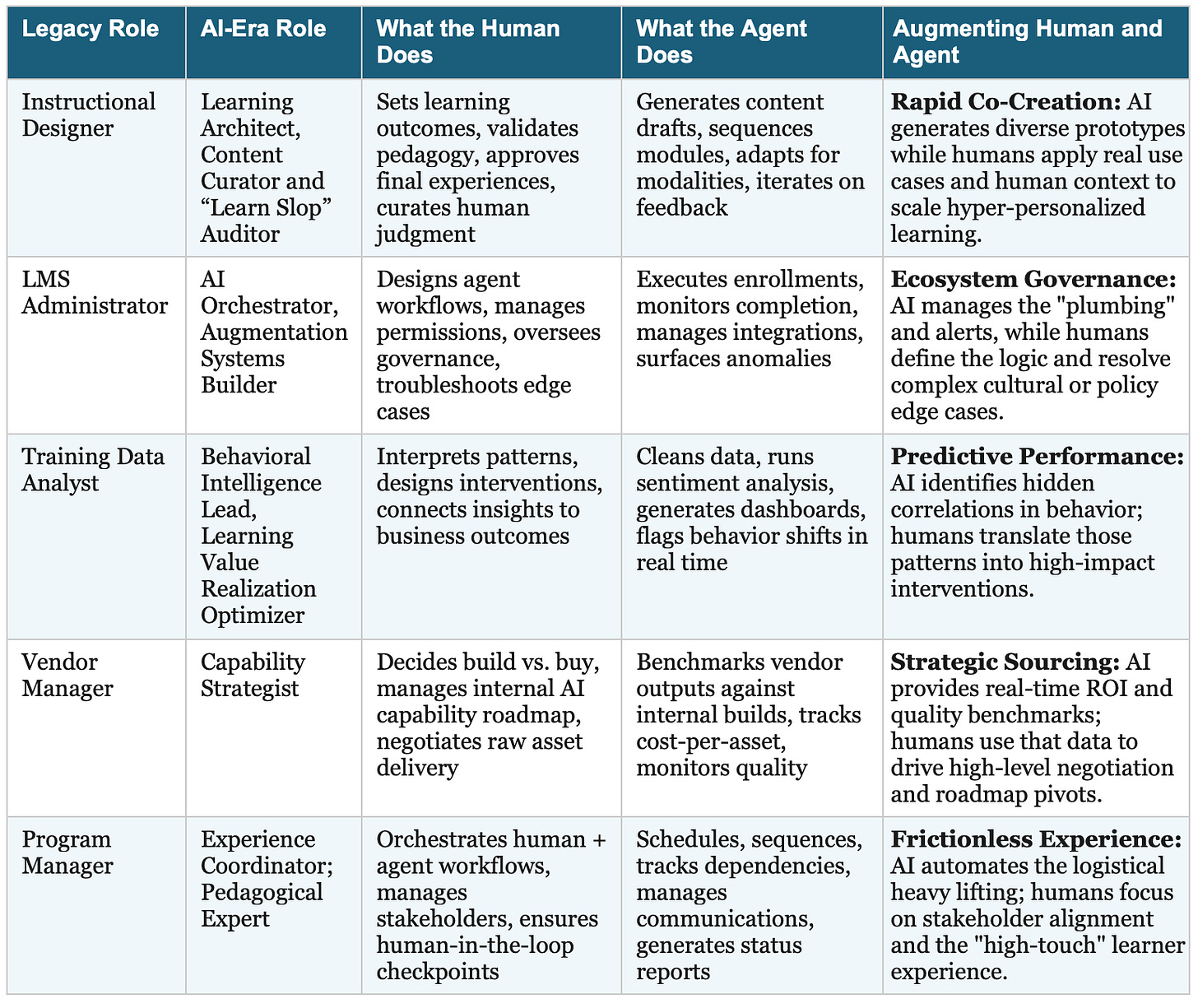

Deconstructing L&D Roles for the Agentic Era

The skills-and-tasks lens is valuable, but let’s be honest: it’s still a human-centric framework being applied to a world where agents are already “employed.” The real question isn’t just which tasks move to AI. It’s where agents will be deployed, where humans will be deployed, and where Humans + Agents will be employed together, and how L&D can coordinate and orchestrate starting from within.

Here is an example of what L&D role deconstruction could look like:

Inspired by the work of Dr. Philippa Hardman [see below]

Notice the pattern: the human roles shift toward judgment, coordination, and strategy. The agent roles take on execution, iteration, and monitoring. This is what Bersin means by the “Superworker”—not a person who works harder, but a person whose AI agents handle the mechanical work so they can focus on the work that actually requires being human. [4]

Refocus all human labor on change management aspects and immediately put any “steady state” items in the hands of AI agents. — Kyle Jackson

AI-Native Instructional Design: The Outputs Must Change Too

Redesigning the L&D team is only half the equation. The products that team builds—the courses, programs, and services—must also be AI-native. In this context, “AI-Native” means two things simultaneously: embedding AI into L&D’s everyday production work, and embedding AI into the learning experiences themselves.

In the Production Workflow

AI-native instructional design replaces the linear ADDIE assembly line with continuous, agent-augmented iteration. A Learning Architect using Cowork doesn’t start with a blank storyboard—they start by feeding the agent the entire corpus of previous program feedback, current business strategy documents, competency frameworks, and learner performance data. The agent synthesizes patterns, proposes learning architectures, and generates first drafts. The human refines, validates, and applies pedagogical judgment. A Synthesia survey found that 95.3% of instructional designers are already integrating AI tools into their workflow, but most are still using them for content generation rather than systemic design. [12]

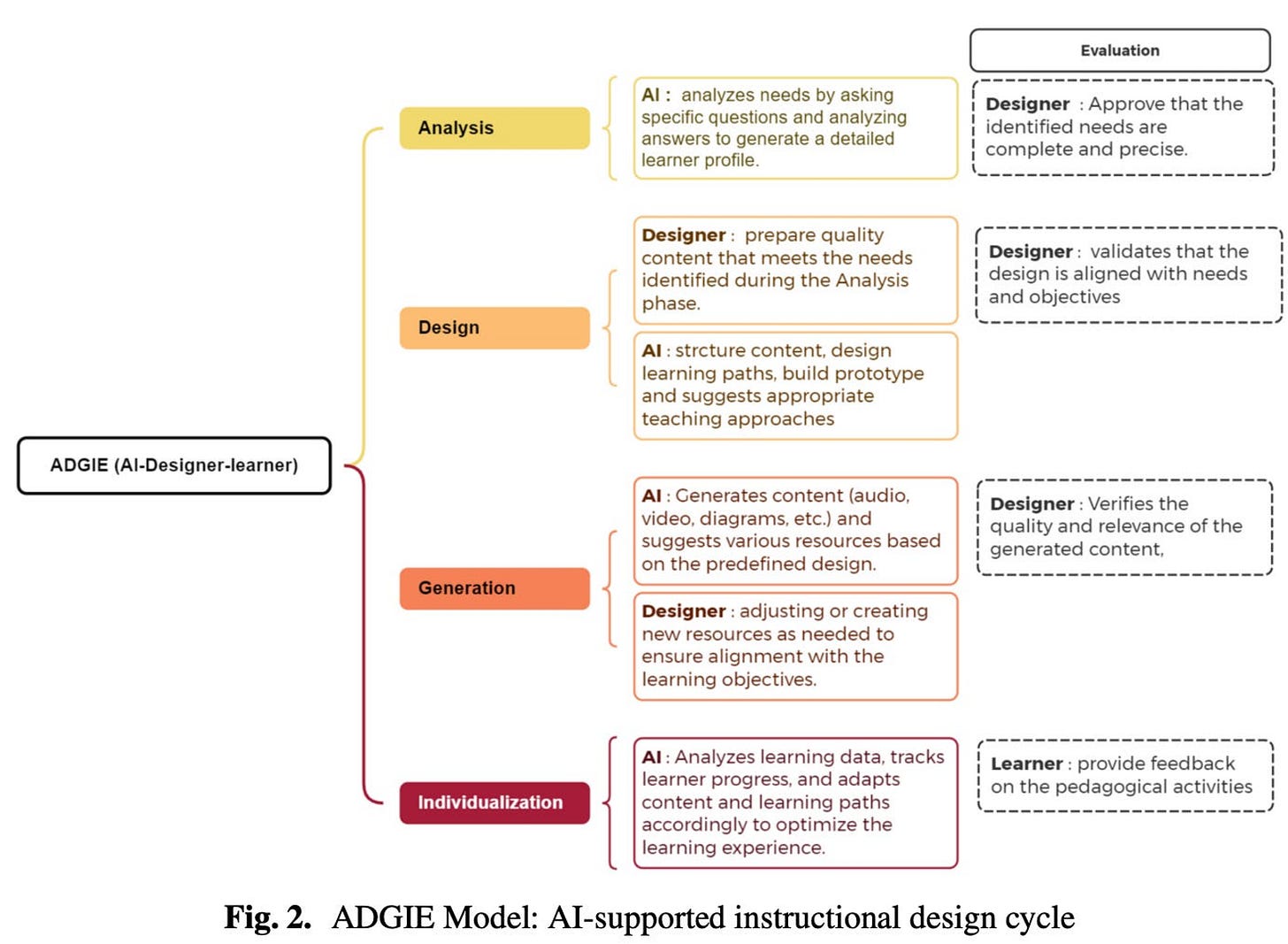

In Dr. Philippa Hardman’s exploration of modern approaches to instructional design, she summarizes the provocative work by a team of researchers in Morocco titled “Toward a New Instructional Design Methodology in the Era of Generative AI”. The paper proposes a new, or modernized, model of ADDIE called ADGIE, for Analysis-Design-Generation-Individualisation-Evaluation. According to Dr. Hardman in her work “From ADDIE to ADGIE?”, ADGIE is not a replacement for ADDIE, but an “evolution of the model”.

“In the article, researchers puts forward the argument that Generative AI presents a long-awaited opportunity to innovate ADDIE and reimagine how we analyze, design, deliver & evaluate learning experiences. ADGIE provides that framework, aiming to integrate AI responsibly and effectively. In effect, ADGIE is a blueprint to help Instructional Designers and educators more broadly to integrate generative AI into their day to day work in a way that optimizes for value while mitigating risks.”

The model has a structure of five somewhat similar phases, yet as described, the embedding of AI and agentic approaches appears to accelerate production without sacrificing learner and learning efficacy.

Toward a New Instructional Design Methodology in the Era of Generative AI

As Dr. Hardman continues: “Overall, I think that this paper and the concept of ADGIE captures a significant appetite within the profession for AI-enhancement of outdated and suboptimal processes. It also provide a much-needed QA of exactly how we are using AI in Instructional Design. It also provides a vocabulary and structure for an evolution that is already well underway.”

In the Learning Experience Itself

This is where most L&D teams are still thinking too small. AI-native learning experiences don’t just use AI to build the content; they embed AI agents directly into the learner’s experience. Think: a Reflection Agent that engages leaders 48 hours after a training session to measure behavioral adoption in real time. A Coaching Agent that surfaces personalized practice scenarios based on a learner’s actual work challenges. A Skills Diagnostic Agent that continuously assesses capability gaps and adjusts learning pathways without waiting for the next quarterly review.

As one eLearning Industry analysis put it: learners in 2026 are no longer just asking “What should I do?” They’re asking, “The system says this, but does it make sense here?” [13] AI-native instructional design teaches humans to work with AI—not just learn from content the AI produced. That’s a fundamentally different design philosophy, and it requires L&D teams who are themselves fluent in human-agent coordination.

The Change Management Blueprint: From Buy to Build

Tool selection and role redesign are necessary but not sufficient. The vendor-to-internal transition requires specific, deliberate change management across three organizational boundaries. A 2026 enterprise survey found that 61% of organizations have adopted or are testing AI in L&D, but adoption is uneven and hindered by gaps in AI literacy, unclear implementation plans, and weak infrastructure. [14] Here is how to cross those boundaries:

🎉 1. Procurement: From Content Licenses to Capability Investment

This is typically the hardest conversation because procurement teams are optimized for vendor management, not internal capability building. The shift requires moving budget from recurring content licenses (OpEx) to API/compute credits, internal talent development, and tool subscriptions (strategic CapEx). Start with a concrete pilot: renegotiate one vendor contract to include “Raw Asset Delivery”. That is, instead of buying a finished SCORM package, you buy the research, transcripts, and source materials to feed into your internal Cowork agents. Track cost-per-asset (internal vs. vendor) and time-to-delivery. When procurement sees that a ~$200/month Cowork subscription and human “controls” labor costs offset a $50K outsourced video series, the math sells itself.
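The pilot math above can be sketched as a small calculation. This is only an illustration: the $200/month subscription and the $50K vendor quote come from the paragraph above, while the pilot length, review hours, hourly rate, and asset count are hypothetical placeholders to replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class PilotCosts:
    """Inputs for comparing one vendor-built series with internal production."""
    vendor_quote: float            # from the text: the $50K outsourced video series
    subscription_per_month: float  # from the text: the ~$200/month subscription
    months: int                    # hypothetical: length of the pilot
    review_hours: float            # hypothetical: human "controls" labor
    hourly_rate: float             # hypothetical: loaded cost of that labor
    assets: int                    # hypothetical: number of assets in the series


def internal_cost(c: PilotCosts) -> float:
    """Total internal cost: subscription fees plus human review labor."""
    return c.subscription_per_month * c.months + c.review_hours * c.hourly_rate


def cost_per_asset(total: float, assets: int) -> float:
    """Spread a total cost evenly across the assets delivered."""
    if assets <= 0:
        raise ValueError("assets must be positive")
    return total / assets


def break_even_review_hours(c: PilotCosts) -> float:
    """Review hours at which internal production costs as much as the vendor."""
    return (c.vendor_quote - c.subscription_per_month * c.months) / c.hourly_rate


pilot = PilotCosts(
    vendor_quote=50_000,
    subscription_per_month=200,
    months=3,
    review_hours=120,
    hourly_rate=85,
    assets=10,
)

internal_total = internal_cost(pilot)
print(f"Internal cost per asset: ${cost_per_asset(internal_total, pilot.assets):,.0f}")
print(f"Vendor cost per asset:   ${cost_per_asset(pilot.vendor_quote, pilot.assets):,.0f}")
print(f"Break-even review hours: {break_even_review_hours(pilot):,.0f}")
```

The break-even figure is the useful one for procurement: it shows how much human review labor the internal route can absorb before the vendor quote becomes the cheaper option.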

🚔 2. Legal: IP Ownership and Agentic Liability

Two non-negotiable governance requirements: First, ensure all AI-generated outputs are 100% company-owned by updating contracts and establishing that work product created by AI agents under company direction constitutes company IP. Second, mandate Human-in-the-Loop (HITL) sign-off on all AI-generated learning content. This isn’t just legal protection—it’s quality assurance. If an AI-generated compliance module provides incorrect advice, you need a documented human review in the chain. Form a bi-weekly “AI Council” with Legal, IT, and L&D to review agentic liability—defining who is responsible when autonomous systems make errors in training content.
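One way to picture the HITL requirement is as a publishing gate that refuses AI-generated content until a named human has signed off, and that keeps a record of the review. The sketch below is a minimal illustration, not a reference to any real LMS or Cowork feature; the `LearningAsset` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class LearningAsset:
    """Hypothetical record for one piece of learning content."""
    title: str
    ai_generated: bool
    reviewer: Optional[str] = None
    audit_log: List[str] = field(default_factory=list)


def sign_off(asset: LearningAsset, reviewer: str) -> None:
    """Record a documented human review on the asset."""
    timestamp = datetime.now(timezone.utc).isoformat()
    asset.reviewer = reviewer
    asset.audit_log.append(f"{timestamp} approved by {reviewer}")


def can_publish(asset: LearningAsset) -> bool:
    """AI-generated assets need a human sign-off; human-authored ones pass."""
    return (not asset.ai_generated) or asset.reviewer is not None


module = LearningAsset(title="Annual compliance refresher", ai_generated=True)
print(can_publish(module))  # False: no human review yet

sign_off(module, reviewer="j.doe")
print(can_publish(module))  # True: review is documented in audit_log
```

The audit log is what the AI Council would look at when asking who was responsible for a given error.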

🫰 3. Finance: The Value Realization Dashboard

Finance needs to see ROI in their language, not yours. Build a “Value Realization Dashboard” that tracks three metrics they care about: Time-to-Proficiency (how quickly new learning programs go from request to delivery), Cost-per-Asset (internal AI-augmented production vs. vendor quotes), and Capacity Recapture (hours returned to the L&D team for strategic work). Deloitte’s Tech Trends 2026 research makes the critical point: value comes from process redesign, not process automation. If you just layer AI onto existing workflows, you “weaponize inefficiency.” [15]

That is, if you measure success using old metrics, you’ll optimize for old outcomes.
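The three dashboard metrics can be computed from a handful of fields per program. The sketch below assumes a hypothetical `Program` record and made-up sample data; Time-to-Proficiency is measured here as days from request to delivery, following the definition given above.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Dict, List


@dataclass
class Program:
    """Hypothetical per-program record feeding the dashboard."""
    name: str
    requested: date
    delivered: date
    internal_cost: float   # AI-augmented internal production cost
    vendor_quote: float    # what a vendor quoted for the same work
    assets: int
    baseline_hours: float  # L&D hours the old process would have taken
    actual_hours: float    # L&D hours actually spent


def value_dashboard(programs: List[Program]) -> Dict[str, float]:
    """Compute the three finance-facing metrics across all programs."""
    total_assets = sum(p.assets for p in programs)
    return {
        "avg_days_request_to_delivery": mean(
            [(p.delivered - p.requested).days for p in programs]
        ),
        "internal_cost_per_asset": sum(p.internal_cost for p in programs) / total_assets,
        "vendor_cost_per_asset": sum(p.vendor_quote for p in programs) / total_assets,
        "capacity_recaptured_hours": sum(
            p.baseline_hours - p.actual_hours for p in programs
        ),
    }


# Made-up sample data for illustration only.
programs = [
    Program("Manager onboarding", date(2026, 1, 5), date(2026, 1, 26),
            4_000, 30_000, 8, 160, 60),
    Program("Sales enablement", date(2026, 2, 2), date(2026, 2, 11),
            2_000, 18_000, 4, 90, 30),
]

for metric, value in value_dashboard(programs).items():
    print(f"{metric}: {value:,.0f}")
```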

L&D’s New Identity: Coordinator and Orchestrator

The most underestimated change requirement is emotional, not technical. Teams will feel threatened. Acknowledge it directly with what I call the “Vulnerability Clause”—an explicit, leader-communicated and (ideally) HR-sanctioned message that AI augmentation is about upgrading every role, not eliminating them.

The organizations pulling ahead need L&D to come in and help them not just with deconstructing work into skills and tasks, but with more fundamental frameworks that, frankly, challenge ANY current thinking that preserves yesterday’s unexamined logic even though tomorrow’s infrastructure is already here.

Deloitte’s Tech Trends 2026 puts it bluntly: “Organizations must treat agents as a silicon-based workforce, with specialized management frameworks for onboarding, performance tracking, and FinOps cost management.” [15] If that’s true enterprise-wide, it’s doubly true inside L&D. Your team isn’t just managing human instructional designers anymore. You’re managing a mixed workforce of humans and agents, each with defined roles, quality standards, and escalation paths.

This is what “coordinate and orchestrate” actually means in practice:

Coordinate means designing the workflows where human judgment and agent execution intersect. Which review gates require human sign-off? What data feeds into the agent’s context? How do you quality-check agent outputs at scale?

Orchestrate means managing the portfolio, ideally of enterprise-wide (e.g., global) programs: deciding which programs are fully AI-produced, which are human-led with agent support, which remain vendor-sourced, and how those decisions evolve as capabilities mature.
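The orchestration decision can be made explicit as a routing rule over the portfolio. The sketch below is one hypothetical way to write it down; the three modes come from the paragraph above, while the routing criteria (`needs_external_expertise`, `risk`) are assumptions for illustration, not a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AI_PRODUCED = "fully AI-produced"
    HUMAN_LED = "human-led with agent support"
    VENDOR = "vendor-sourced"


@dataclass
class ProgramRequest:
    """Hypothetical intake record for one program in the portfolio."""
    name: str
    needs_external_expertise: bool  # assumption: e.g. licensed or certified content
    risk: str                       # assumption: "low" or "high" (compliance, legal)


def route(request: ProgramRequest) -> Mode:
    """Assign a production mode using illustrative, easily changed rules."""
    if request.needs_external_expertise:
        return Mode.VENDOR
    if request.risk == "high":
        return Mode.HUMAN_LED
    return Mode.AI_PRODUCED


portfolio = [
    ProgramRequest("Tool walkthrough refresh", False, "low"),
    ProgramRequest("Global leadership program", False, "high"),
    ProgramRequest("Regulated certification prep", True, "high"),
]

for request in portfolio:
    print(f"{request.name}: {route(request).value}")
```

Keeping the rules in one small function makes it easy to revisit them as capabilities mature, which is the part of orchestration that changes most often.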

McKinsey’s own People function is already modeling this. Their proprietary AI platform Lilli has saved active users two to three million hours, with top agents saving consultants 20,000–34,000 hours annually. [16] They’re not just using AI to produce content faster. They’re redesigning how learning, credentialing, and leadership development work as integrated human-agent systems.

The Service Bureau Is Dead. The Orchestrator Is Born.

The organizations that win in 2026 won’t be the ones with the most advanced AI tools. They’ll be the ones whose L&D teams have the courage to redesign themselves first; to deconstruct their own roles, to renegotiate their own vendor relationships, to build their own agentic capabilities before asking the rest of the enterprise to do the same.

And this is about far, far more than efficiencies and cost controls.

So, choose your tool. Cowork for the narrative and strategic work. Codex for the engineering and analytics. Or leverage whatever is already sanctioned and approved by IT. Then do the harder thing: redesign your team, renegotiate your vendor model, and lead the transition you keep telling everyone else to make.

These are the best days of our L&D vocation. Not exclusively due to AI. There’s a new sense of self occurring. A rediscovery of purpose.

If anything, AI is a requisite mirror allowing us to see who we really are, what is really happening, why we’re really here, and to accelerate achievement.

Acknowledging the use of Gemini, Perplexity, ChatGPT, and Claude for research, formatting, challenging assumptions and aiding the creative process.

The views and opinions expressed in this article are solely those of the author and do not necessarily reflect the views of the author’s employer or any affiliated organizations.

Licensing

Unless otherwise noted, the contents of this series are licensed under the Creative Commons Attribution 4.0 International license; CC0 1.0 Universal (CC0 1.0) Public Domain Dedication.

Should you choose to exercise any of the 5R permissions granted you under the Creative Commons Attribution 4.0 license, please attribute me in accordance with CC’s best practices for attribution.

If you would like to attribute me differently or use my work under different terms, contact me at https://www.linkedin.com/in/marcsramos/.

Appendix

[A] Claude Cowork 11 Plugins (Launch, January 2026)

References

[1] Anthropic. (2026, January 12). Introducing Claude Cowork [Blog post].

[2] FinancialContent. (2026, February 6). Anthropic’s ‘Claude Cowork’ release triggers $285 billion ‘SaaSpocalypse.’ FinancialContent

[3] OpenAI. (2026, February). Codex changelog: GPT-5.3-Codex. Retrieved from developers.openai.com

[4] The Josh Bersin Company. (2026, January 21). In 2026 AI-powered superagents will radically change HR [Press release]. joshbersin.com

[5] McKinsey & Company. (2025, January). Superagency in the workplace: Empowering people to unlock AI’s full potential at work. mckinsey.com

[6] InfoQ. (2026, January). Anthropic announces Claude Cowork. infoq.com

[7] MarkTechPost. (2026, February 5). Anthropic releases Claude Opus 4.6 with 1M context. marktechpost.com

[8] OpenAI. (2026, February). Introducing the Codex app [Blog post]. openai.com

[9] Deloitte. (2025). From jobs to skills to outcomes: Rethinking how work gets done. Deloitte Insights. deloitte.com

[10] Jesuthasan, R. (2025). Want AI-driven productivity? Redesign work. MIT Sloan Management Review. MIT Sloan

[11] Fortune. (2026, January 22). Deloitte to scrap traditional job titles as AI ushers in a ‘modernization.’ fortune.com

[12] Synthesia. (2026). AI in Learning & Development report 2026. synthesia.io

[13] eLearning Industry. (2026, January). Learning design in 2026: AI learns fast, humans learn wise. elearningindustry.com

[14] Intellum. (2026). 4 AI trends in L&D to prepare for in 2026. intellum.com

[15] Digital CxO. (2025, December 15). Deloitte Tech Trends 2026: Moving from AI experimentation to enterprise impact. digitalcxo.com

[16] McKinsey & Company. (2025). AI in the people function: Building leaders, improving processes, creating value. mckinsey.com